📚 Table of Contents

- The AI Product Revolution Is Here

- What Makes AI Products Different

- Understanding LLMs: A PM’s Practical Guide

- RAG Explained: Making LLMs Smarter

- The AI Product Development Lifecycle

- Metrics for AI Products: Beyond Traditional KPIs

- Working with ML Engineers: Communication That Works

- AI Ethics and Responsible AI for PMs

- Tools and Platforms for AI Product Development

- Getting Started in AI Product Management

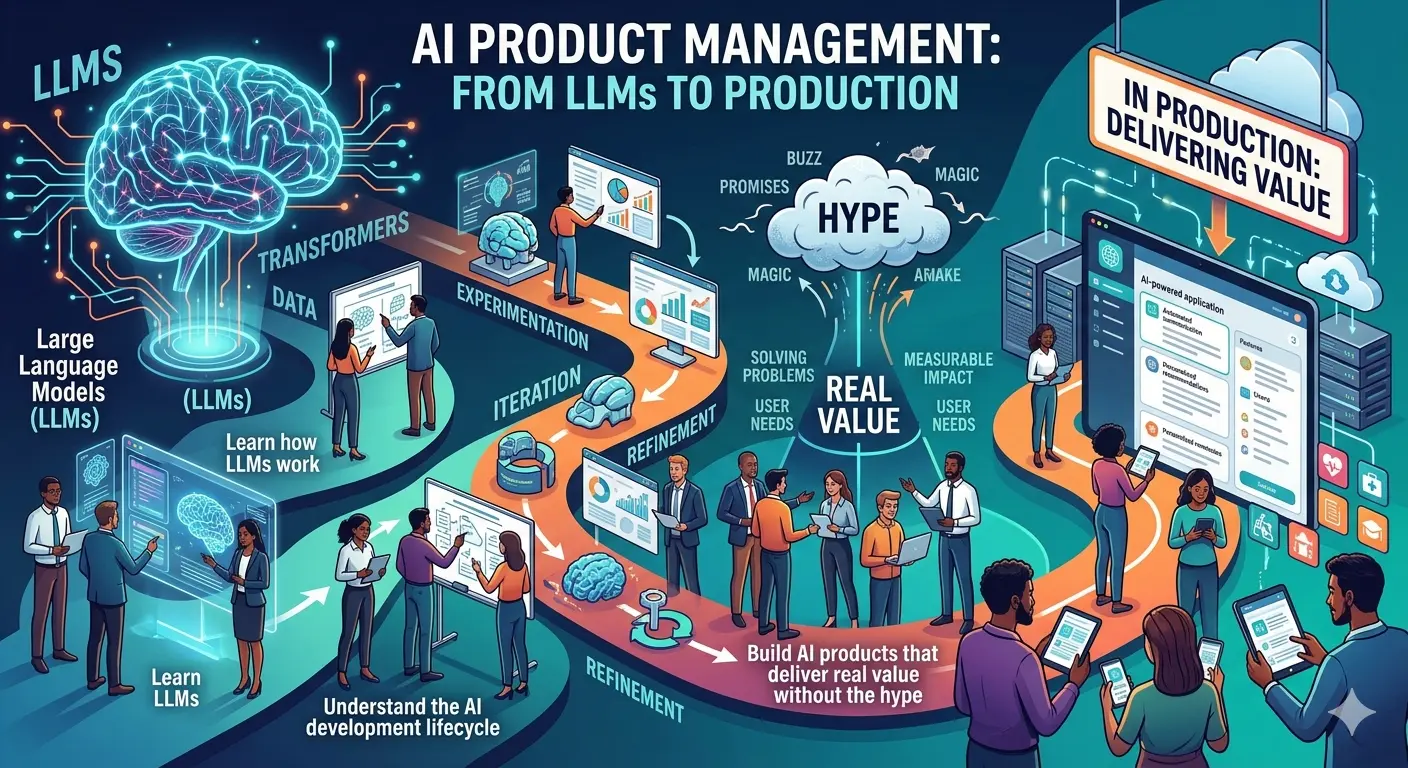

The AI Product Revolution Is Here

In 2023, I watched a product team spend six months building an AI feature. They hired expensive ML engineers, trained custom models, and invested heavily in infrastructure. When they launched, users… didn’t care. The feature worked technically but solved a problem nobody had.

Six months later, another team I worked with built an AI feature in two weeks using an LLM API. They launched quickly, learned from user behavior, and iterated. Their feature became a core part of the product, driving significant user engagement and revenue.

The difference wasn’t technology. It was product thinking.

AI product management isn’t about understanding neural network architecture or gradient descent. It’s about understanding what AI can do, what it can’t do, and how to bridge the gap between technical capability and user value.

This guide will give you that understanding.

The AI Opportunity for PMs

The AI market is exploding. Every company is asking: “How do we add AI to our product?” But most AI features fail. Not because the technology doesn’t work, but because the product thinking is wrong.

You have an opportunity. While everyone else is chasing AI hype, you can build AI products that actually deliver value. This guide will show you how.

What Makes AI Products Different

AI products aren’t just software products with AI bolted on. They require fundamentally different thinking.

The Five Key Differences

1. Probabilistic, Not Deterministic

Traditional software: Same input → Same output. Always.

AI software: Same input → Different outputs sometimes. That’s the nature of probability.

Traditional Software:

User clicks "Submit" → Form submits → Success message

(100% predictable behavior)

AI Software:

User asks "Summarize this document" →

Sometimes: Great summary

Sometimes: Mediocre summary

Sometimes: Completely wrong summary

(Variable quality, need to handle all cases)

Product implication: You need to design for variability. How do you handle when the AI gets it wrong? How do you set user expectations? How do you measure quality?

2. Data Is the Product

In traditional software, you design features and implement them. In AI software, your data determines what’s possible.

Traditional: "Build a spam filter" → Engineer implements rules

AI: "Build a spam filter" → "What training data do we have?"

Product implication: Data strategy is product strategy. What data do you need? How do you get it? How do you maintain quality?

3. Improvement Requires Iteration

Traditional software: Launch → Fix bugs → Done

AI software: Launch → Collect data → Retrain → Improve → Repeat

Product implication: AI products are never “done.” They require ongoing iteration, measurement, and improvement cycles.

4. Edge Cases Are the Norm

Traditional software: Handle the happy path; edge cases are exceptions

AI software: Edge cases are everywhere, and they’re hard to predict

Product implication: You need robust monitoring, user feedback loops, and graceful degradation.

5. Cost Structure Is Different

Traditional software: Fixed development cost, marginal cost near zero

AI software: Fixed development cost PLUS ongoing inference costs

Product implication: Every AI interaction has a cost. You need to think about unit economics from day one.

The AI Product Framework

┌─────────────────────────────────────────────────────────────┐

│ AI PRODUCT DECISION TREE │

├─────────────────────────────────────────────────────────────┤

│ │

│ Does this problem actually need AI? │

│ ├── NO → Use traditional software │

│ └── YES → Continue │

│ │

│ Do you have the data to train/evaluate? │

│ ├── NO → Start with data collection strategy │

│ └── YES → Continue │

│ │

│ Can you tolerate probabilistic outputs? │

│ ├── NO → Consider hybrid (AI + rules) or traditional │

│ └── YES → Continue │

│ │

│ Can you afford ongoing inference costs? │

│ ├── NO → Optimize or reconsider │

│ └── YES → Continue │

│ │

│ Do you have a feedback loop for improvement? │

│ ├── NO → Build one before launching │

│ └── YES → Build and iterate │

│ │

└─────────────────────────────────────────────────────────────┘
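
If it helps to see the decision tree as logic rather than a diagram, here is a minimal sketch. The class and field names are my own invention for illustration; the recommendations are the ones in the tree. The function returns the first "NO" branch it hits, checking the questions in the same order as the diagram.

```python
from dataclasses import dataclass


@dataclass
class FeatureAssessment:
    """Answers to the five questions in the decision tree (hypothetical field names)."""
    needs_ai: bool
    has_data: bool
    tolerates_probabilistic_output: bool
    can_afford_inference: bool
    has_feedback_loop: bool


def next_step(a: FeatureAssessment) -> str:
    """Walk the tree top to bottom and return the first recommendation that applies."""
    if not a.needs_ai:
        return "Use traditional software"
    if not a.has_data:
        return "Start with data collection strategy"
    if not a.tolerates_probabilistic_output:
        return "Consider hybrid (AI + rules) or traditional"
    if not a.can_afford_inference:
        return "Optimize or reconsider"
    if not a.has_feedback_loop:
        return "Build one before launching"
    return "Build and iterate"


idea = FeatureAssessment(True, True, True, True, False)
print(next_step(idea))  # Build one before launching
```

The order matters: a feature with no feedback loop and no real need for AI should get the "use traditional software" answer first.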

Understanding LLMs: A PM’s Practical Guide

Large Language Models (LLMs) have transformed AI product development. Understanding how they work—and don’t work—is essential.

What LLMs Actually Do

LLMs are pattern-matching engines trained on massive amounts of text. They predict the next most likely word (token) based on context.

Input: "The cat sat on the ___"

LLM prediction: "mat" (most likely), "floor" (less likely), "cloud" (unlikely)

It's not thinking. It's statistically predicting.

The key insight: LLMs don’t “understand” anything. They generate plausible text based on patterns. Sometimes that text is exactly right. Sometimes it’s confidently wrong.
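
To make "statistically predicting" concrete, here is a toy sketch built on the cat example. The probabilities are made up, and a real model scores a huge vocabulary with a neural network rather than a three-entry table. What it shows is the difference between always picking the top word and sampling in proportion to probability, which is why the same prompt can give different answers.

```python
import random

# Made-up probabilities for the word after "The cat sat on the"; a real model
# scores tens of thousands of tokens, not three.
NEXT_WORD_PROBS = {"mat": 0.70, "floor": 0.25, "cloud": 0.05}


def most_likely(probs: dict[str, float]) -> str:
    """Greedy choice: always pick the highest-probability word."""
    return max(probs, key=probs.get)


def sample(probs: dict[str, float], rng: random.Random) -> str:
    """Sampled choice: pick a word in proportion to its probability."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


rng = random.Random(0)
print(most_likely(NEXT_WORD_PROBS))  # mat
print([sample(NEXT_WORD_PROBS, rng) for _ in range(5)])  # mostly "mat", not always
```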

LLM Capabilities (What They’re Good At)

| Capability | Example | Reliability |

|---|---|---|

| Text generation | Writing drafts, summaries, variations | High |

| Transformation | Translation, reformatting, style changes | High |

| Extraction | Pulling structured data from unstructured text | Medium-High |

| Classification | Categorizing text into predefined buckets | Medium-High |

| Question answering | Answering questions from provided context | Medium |

| Reasoning | Multi-step logic, math, analysis | Low-Medium |

| Factual recall | Answering questions about the world | Low (often hallucinates) |

LLM Limitations (What They Struggle With)

1. Hallucination

LLMs generate plausible-sounding but factually incorrect information.

User: "Who won the Super Bowl in 2035?"

LLM: "The Kansas City Chiefs defeated the San Francisco 49ers, 31-20."

Problem: 2035 hasn't happened. The LLM invented a plausible-sounding answer.

Mitigation: Use RAG (see below), ground responses in provided context, display confidence levels.

2. Context Length Limits

LLMs can only process a limited amount of text at once.

GPT-4: ~8,000-128,000 tokens (roughly 6,000-96,000 words)

Claude: Up to 200,000 tokens

Gemini: Up to 1,000,000 tokens (for some models)

Product implication: You can't feed an entire knowledge base into one prompt.

Mitigation: Use RAG to retrieve relevant chunks, summarize before processing, design for token limits.
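
Here is a rough sketch of "design for token limits". It assumes the 0.75 words-per-token ratio implied by the GPT-4 figures above; real tokenizers vary by model and language, so treat the numbers as estimates. The function names are hypothetical.

```python
WORDS_PER_TOKEN = 0.75  # rough ratio implied by "8,000 tokens is roughly 6,000 words"


def estimate_tokens(text: str) -> int:
    """Very rough token estimate from word count; real tokenizers will differ."""
    words = len(text.split())
    return round(words / WORDS_PER_TOKEN)


def chunk_by_token_budget(text: str, max_tokens: int) -> list[str]:
    """Split text into word-aligned chunks that each fit the estimated budget."""
    max_words = max(1, int(max_tokens * WORDS_PER_TOKEN))
    words = text.split()
    return [
        " ".join(words[i:i + max_words])
        for i in range(0, len(words), max_words)
    ]


doc = "word " * 1000
print(estimate_tokens(doc))  # 1333
print(len(chunk_by_token_budget(doc, max_tokens=400)))  # 4
```

In a real product you would use the provider's tokenizer for exact counts and split on paragraph or section boundaries rather than raw word counts.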

3. Lack of True Understanding

LLMs pattern-match but don’t reason like humans.

LLM: "I understand your concern."

Reality: It's generating a pattern-matched response. It doesn't actually "understand" anything.

Product implication: Don't anthropomorphize. Design for what LLMs actually do.

4. Inconsistency

The same input can produce different outputs.

Input: "Write a haiku about debugging"

Output 1: "Bugs hide in the code / Searching through lines of logic / Found it, now it works"

Output 2: "Error messages / The compiler points at lines / Still broken somehow"

Product implication: Set user expectations, provide regeneration options, test with multiple runs.
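
"Test with multiple runs" can be as simple as the sketch below: call the model several times with the same prompt and measure how often the most common answer comes back. The `fake_model` function is a stand-in I made up so the example runs without an API key; you would swap in your own model call.

```python
import random
from collections import Counter
from typing import Callable


def agreement_rate(generate: Callable[[str], str], prompt: str, runs: int) -> float:
    """Share of runs that returned the single most common output."""
    outputs = Counter(generate(prompt) for _ in range(runs))
    top_count = outputs.most_common(1)[0][1]
    return top_count / runs


# Stand-in for a model call (hypothetical): returns one of a few canned labels.
_rng = random.Random(42)


def fake_model(prompt: str) -> str:
    return _rng.choices(["billing", "technical", "other"], weights=[8, 1, 1], k=1)[0]


print(agreement_rate(fake_model, "Classify: 'I was charged twice'", runs=20))
```

Exact-match agreement suits short outputs like labels. For free-form text such as the haiku, you would need a looser comparison, for example human review of a sample.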

LLM Product Decisions

When building with LLMs, you’ll make these decisions:

Model Selection:

- Open source (Llama, Mistral) vs. commercial (GPT-4, Claude)

- Trade-offs: Cost, capability, data privacy, latency

Prompt Engineering:

- How you phrase inputs dramatically affects outputs

- Iterative experimentation is essential

- Prompt templates become product code

Temperature and Parameters:

- Temperature: Controls randomness (near 0 = most predictable, though not always perfectly repeatable; around 1 = more varied and creative)

- Lower for factual tasks, higher for creative tasks
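
For the curious, here is a sketch of the usual math behind temperature: raw scores are divided by the temperature before being turned into probabilities. The scores are made up, and providers may apply extra sampling settings on top, so read it as an illustration rather than any vendor's implementation.

```python
import math


def softmax_with_temperature(scores: dict[str, float], temperature: float) -> dict[str, float]:
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        # Dividing by zero is undefined; a setting of 0 is typically handled
        # as "always pick the top token" instead.
        raise ValueError("temperature must be greater than 0")
    scaled = {w: s / temperature for w, s in scores.items()}
    top = max(scaled.values())  # subtract the max for numerical stability
    exps = {w: math.exp(s - top) for w, s in scaled.items()}
    total = sum(exps.values())
    return {w: e / total for w, e in exps.items()}


# Made-up raw scores (logits) for three candidate next words.
scores = {"mat": 2.0, "floor": 1.0, "cloud": -1.0}
for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(scores, t)
    print(t, {w: round(p, 3) for w, p in probs.items()})
```

At 0.2 nearly all the probability lands on the top word. At 2.0 the unlikely words get a real chance, which is where the "creative" behavior comes from.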

Cost Management:

- Token-based pricing means every interaction costs money

- Caching, batching, and model selection affect costs significantly
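
A back-of-the-envelope cost model is worth building early. The sketch below uses hypothetical per-million-token prices and assumes a cache hit costs nothing, which is a simplification. Plug in your provider's actual price list.

```python
from dataclasses import dataclass


@dataclass
class Pricing:
    """Hypothetical per-million-token prices in USD; check your provider's price list."""
    input_per_million: float
    output_per_million: float


def cost_per_request(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Cost of one uncached model call."""
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


def daily_cost(requests: int, cache_hit_rate: float, per_request: float) -> float:
    """Assumes a cache hit costs nothing, which ignores cache infrastructure costs."""
    return requests * (1 - cache_hit_rate) * per_request


pricing = Pricing(input_per_million=3.0, output_per_million=15.0)
per_request = cost_per_request(input_tokens=2_000, output_tokens=500, pricing=pricing)
print(round(per_request, 4))  # 0.0135
print(round(daily_cost(10_000, cache_hit_rate=0.4, per_request=per_request), 2))  # 81.0
```

Output tokens are priced higher than input tokens in this example, so trimming response length can matter as much as trimming the prompt.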

RAG Explained: Making LLMs Smarter

Retrieval-Augmented Generation (RAG) is the technique that makes LLMs useful for real products. It reduces hallucination by grounding LLM responses in real data, though it doesn’t eliminate it.

How RAG Works

┌─────────────────────────────────────────────────────────────┐

│ RAG ARCHITECTURE │

├─────────────────────────────────────────────────────────────┤

│ │

│ 1. USER QUERY │

│ "What's our refund policy for enterprise customers?" │

│ │ │

│ ▼ │

│ 2. EMBEDDING │

│ Convert query to vector representation │

│ │ │

│ ▼ │

│ 3. RETRIEVAL │

│ Search knowledge base for relevant documents │

│ [Refund Policy Doc, Enterprise Terms Doc, ...] │

│ │ │

│ ▼ │

│ 4. AUGMENTATION │

│ Combine query + retrieved documents into prompt │

│ │ │

│ ▼ │

│ 5. GENERATION │

│ LLM generates response grounded in retrieved context │

│ │ │

│ ▼ │

│ 6. OUTPUT │

│ "Enterprise customers can request refunds within 90 │

│ days. The process involves contacting your account │

│ manager or submitting through the enterprise portal." │

│ │

└─────────────────────────────────────────────────────────────┘
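
Steps 1 through 4 of the diagram fit in a few dozen lines if you accept a big simplification: the "embedding" below is just word counts, where a real system would use a learned embedding model and a vector database. The documents and function names are invented for illustration, and step 5 (the LLM call) is left out so the sketch runs anywhere.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; the policy text is invented for illustration.
DOCS = {
    "refund_policy": "Enterprise customers can request refunds within 90 days.",
    "enterprise_terms": "Enterprise plans include a dedicated account manager.",
    "office_hours": "Support is available Monday to Friday, 9am to 5pm.",
}


def embed(text: str) -> Counter:
    """Stand-in embedding: lowercase word counts. Real systems use a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, k: int = 2) -> list[str]:
    """Steps 2-3: embed the query and return the ids of the k most similar docs."""
    q = embed(query)
    ranked = sorted(DOCS, key=lambda d: cosine(q, embed(DOCS[d])), reverse=True)
    return ranked[:k]


def build_prompt(query: str, doc_ids: list[str]) -> str:
    """Step 4: combine the query and the retrieved text into one prompt."""
    context = "\n".join(f"- {DOCS[d]}" for d in doc_ids)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


query = "What's our refund policy for enterprise customers?"
top = retrieve(query)
print(top)  # ['refund_policy', 'enterprise_terms']
print(build_prompt(query, top))  # Step 5 would send this prompt to an LLM
```

Notice that word counts treat "refund" and "refunds" as different words. Learned embeddings handle that kind of near-match far better, which is one reason the embedding model affects retrieval quality.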

Why RAG Matters for PMs

RAG transforms LLMs from chatbots that make things up to systems that answer questions based on your actual data.

Without RAG:

User: "What's our refund policy?"

LLM: "Most companies offer 30-day refunds..." (generic, possibly wrong)

With RAG:

User: "What's our refund policy?"

LLM: "Based on our policy document, you can request a refund within

60 days of purchase by contacting support@company.com or

through your account settings..." (specific to your company)

RAG Components Every PM Should Know

| Component | What It Does | Product Implications |

|---|---|---|

| Document Store | Where your knowledge lives | What content do you include? How do you keep it updated? |

| Embedding Model | Converts text to vectors | Affects retrieval quality |

| Vector Database | Stores embeddings for search | Scale, cost, latency considerations |

| Retriever | Finds relevant documents | How many documents to retrieve? How to rank relevance? |

| LLM | Generates the response | Model selection, prompt engineering |

RAG Product Decisions

What content goes in your knowledge base?

- Product documentation, FAQs, policies, past tickets?

- How do you handle conflicting information?

- How do you keep it updated?

How do you handle missing information?

- “I don’t know” vs. best-effort answer

- Escalation to human support

- Feedback loop to identify gaps

How do you measure quality?

- Retrieval quality: Did we find the right documents?

- Generation quality: Did the LLM use them correctly?

- User satisfaction: Did the answer help?
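
Retrieval quality is the easiest of the three to measure. One simple option, sketched below with a made-up evaluation set, is hit rate at k: for each test question, did the document a human marked as correct show up in the top k results? The function name and data are hypothetical.

```python
def hit_rate_at_k(results: list[list[str]], expected: list[str], k: int) -> float:
    """Share of queries whose expected document appears in the top-k retrieved ids."""
    if not results:
        return 0.0
    hits = sum(1 for ranked, want in zip(results, expected) if want in ranked[:k])
    return hits / len(results)


# Hypothetical evaluation set: retrieved ids per query, plus the id a human
# reviewer marked as the right source for that query.
retrieved = [
    ["refund_policy", "enterprise_terms"],
    ["office_hours", "refund_policy"],
    ["enterprise_terms", "office_hours"],
    ["office_hours", "enterprise_terms"],
]
expected = ["refund_policy", "refund_policy", "refund_policy", "office_hours"]

print(hit_rate_at_k(retrieved, expected, k=1))  # 0.5
print(hit_rate_at_k(retrieved, expected, k=2))  # 0.75
```

If hit rate is low, no amount of prompt engineering will fix the answers, because the LLM never saw the right document.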

The AI Product Development Lifecycle

AI products follow a different development lifecycle than traditional software.

The AI Lifecycle vs. Traditional Lifecycle

TRADITIONAL SOFTWARE:

──────────────────────────────────────────────────────────────

Requirements → Design → Build → Test → Deploy → Maintain

AI SOFTWARE:

──────────────────────────────────────────────────────────────

Problem → Data → Model → Evaluate → Deploy → Monitor → Improve

   ↑                                                        │

   └──────────────────── Feedback Loop ─────────────────────┘

Stage 1: Problem Definition

The most important stage. Many AI projects fail because they solve the wrong problem.

Questions to answer:

- What user problem are we solving?

- Is AI the right solution, or is traditional software better?

- What does “good” look like? How will we measure success?

Common pitfalls:

- Using AI because it’s trendy, not because it’s needed

- Vague success criteria

- Underestimating edge cases

Stage 2: Data Strategy

AI is only as good as its data.

Questions to answer:

- What data do we need?

- Do we have it? If not, how do we get it?

- Is the data quality sufficient?

- Are there privacy/legal considerations?

Data requirements vary by approach:

| Approach | Data Needed | Timeline |

|---|---|---|

| Use pre-trained LLM | None or minimal examples | Days to weeks |

| Fine-tune existing model | Hundreds to thousands of examples | Weeks |

| Train custom model | Millions of examples | Months |

Stage 3: Model Development

This is where ML engineers do their work. As a PM, your role is:

- Prioritizing requirements

- Providing domain knowledge

- Defining evaluation criteria

- Making trade-off decisions

Key trade-offs:

- Accuracy vs. latency

- Model size vs. cost

- Specialization vs. generalization

Stage 4: Evaluation

How do you know if your AI is working?

Evaluation methods:

| Method | What It Measures | When to Use |

|---|---|---|

| Offline metrics | Model performance on test data | During development |

| Human evaluation | Expert review of outputs | Before launch, periodically |

| A/B testing | User behavior comparison | After launch |

| User feedback | Direct user ratings | Ongoing |

| Business metrics | Revenue, retention, support tickets | Ongoing |

Stage 5: Deployment

AI deployment has unique considerations:

- A/B testing: Gradual rollout to manage risk

- Shadow mode: Run AI alongside existing process, compare results

- Canary deployment: Small percentage of users first

- Monitoring: Real-time alerts for quality degradation
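
One common way to implement a canary rollout, sketched here under my own assumptions, is to hash each user ID into a bucket from 0 to 99 and enable the feature for buckets below the rollout percentage. The function name and salt value are hypothetical.

```python
import hashlib


def in_canary(user_id: str, rollout_percent: int, salt: str = "ai-summary-v1") -> bool:
    """Deterministically place a user in the canary group.

    Hashing keeps each user in the same group across sessions; the salt
    (a hypothetical feature name) makes buckets independent across features.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # 0..99
    return bucket < rollout_percent


users = [f"user-{i}" for i in range(10_000)]
share = sum(in_canary(u, rollout_percent=5) for u in users) / len(users)
print(round(share, 3))  # expected to land near 0.05
```

Because the assignment is deterministic, a user who saw the AI feature yesterday still sees it today, and raising the percentage only adds users to the group.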

Stage 6: Monitoring and Improvement

AI products degrade over time without attention.

Things to monitor:

- Data drift (often lumped under “model drift”): Input data changing over time

- Concept drift: What counts as a correct answer changing, for example as user expectations shift

- Performance: Latency, error rates

- Business impact: User satisfaction, cost
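
For the first item, input data changing over time, a simple check is to compare the mix of incoming query topics now against the mix at launch. The sketch below uses total variation distance, one of several reasonable measures. The topics, counts, and alert threshold are all made up.

```python
from collections import Counter


def distribution(labels: list[str]) -> dict[str, float]:
    """Convert a list of category labels into shares that sum to 1."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: n / total for label, n in counts.items()}


def total_variation_distance(baseline: list[str], recent: list[str]) -> float:
    """0.0 means identical category mix; 1.0 means no overlap at all."""
    p, q = distribution(baseline), distribution(recent)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q))


# Hypothetical query topics at launch vs. last week.
baseline = ["billing"] * 50 + ["login"] * 30 + ["refund"] * 20
recent = ["billing"] * 30 + ["login"] * 30 + ["refund"] * 20 + ["api"] * 20

DRIFT_ALERT_THRESHOLD = 0.15  # assumed value; tune on your own history
drift = total_variation_distance(baseline, recent)
print(round(drift, 2), drift > DRIFT_ALERT_THRESHOLD)  # 0.2 True
```

Here a new "api" topic has appeared that the system was never evaluated on, which is exactly the kind of shift you want an alert for.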

The improvement cycle:

- Monitor performance

- Identify problems

- Collect new data

- Retrain or adjust

- Evaluate changes

- Deploy improvements

- Repeat

Metrics for AI Products: Beyond Traditional KPIs

Traditional product metrics (DAU, conversion, retention) still matter. But AI products need additional metrics.

AI-Specific Metrics

Quality Metrics:

| Metric | What It Measures | How to Track |

|---|---|---|

| Accuracy | Correctness of predictions | Manual review, ground truth comparison |

| Precision | Of positive predictions, how many are correct | Evaluation dataset |

| Recall | Of actual positives, how many did we catch | Evaluation dataset |

| F1 Score | Balance of precision and recall | Evaluation dataset |

| User satisfaction | Did the user find the answer helpful? | Explicit ratings, implicit signals |
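
Precision, recall, and F1 are easier to reason about once you have computed them by hand. Here is a small sketch for a yes/no classifier, using a made-up spam-filter evaluation set of ten messages.

```python
def precision_recall_f1(actual: list[bool], predicted: list[bool]) -> tuple[float, float, float]:
    """Compute precision, recall, and F1 for a binary classifier."""
    tp = sum(a and p for a, p in zip(actual, predicted))        # caught spam
    fp = sum((not a) and p for a, p in zip(actual, predicted))  # false alarms
    fn = sum(a and (not p) for a, p in zip(actual, predicted))  # missed spam
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical spam-filter evaluation: True means "spam".
actual = [True, True, True, True, False, False, False, False, False, False]
predicted = [True, True, True, False, True, False, False, False, False, False]

p, r, f1 = precision_recall_f1(actual, predicted)
print(round(p, 2), round(r, 2), round(f1, 2))  # 0.75 0.75 0.75
```

In this example the filter flagged four messages and three were really spam (precision 0.75), and it caught three of the four spam messages (recall 0.75). Which of the two matters more is a product decision: a missed spam message is annoying, while a real email sent to the spam folder can be costly.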

Operational Metrics:

| Metric | What It Measures | Why It Matters |

|---|---|---|

| Latency | Time to generate response | User experience |

| Token usage | Input/output tokens per request | Cost |

| Error rate | Failed requests percentage | Reliability |

| Cache hit rate | Requests served from cache | Cost, latency |

Business Metrics:

| Metric | What It Measures | Why It Matters |

|---|---|---|

| Cost per query | Inference cost per interaction | Unit economics |

| Support deflection | Queries that don’t escalate to humans | ROI |

| Time to resolution | How fast users get answers | User experience |

| Feature adoption | Usage of AI features | Product-market fit |

The AI Metrics Dashboard

┌─────────────────────────────────────────────────────────────┐

│ AI PRODUCT DASHBOARD │

├─────────────────────────────────────────────────────────────┤

│ │

│ QUALITY OPERATIONAL │

│ ├── Accuracy: 87% ├── Avg Latency: 2.3s │

│ ├── User Satisfaction: 4.2 ├── Error Rate: 0.3% │

│ ├── Hallucination Rate: 4% └── Cache Hit: 45% │

│ │

│ BUSINESS COSTS │

│ ├── Queries/Day: 12,450 ├── Daily Spend: $234 │

│ ├── Support Deflection: 34% ├── Cost/Query: $0.019 │

│ └── Feature Adoption: 28% └── Monthly: $7,020 │

│ │

└─────────────────────────────────────────────────────────────┘
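
The cost figures on the sample dashboard are related by simple arithmetic, and it is worth being able to reproduce them. The first part of this sketch uses the dashboard's own numbers. The what-if at the end is my own assumption that spend scales only with uncached requests.

```python
# Figures taken from the sample dashboard above.
daily_spend_usd = 234
queries_per_day = 12_450

cost_per_query = daily_spend_usd / queries_per_day
monthly_spend = daily_spend_usd * 30  # the dashboard's monthly figure implies a 30-day month

print(round(cost_per_query, 3))  # 0.019
print(monthly_spend)  # 7020

# Hypothetical what-if: raising the cache hit rate from 45% to 60%, assuming
# spend scales with uncached requests only.
uncached_now, uncached_target = 1 - 0.45, 1 - 0.60
projected_daily_spend = daily_spend_usd * uncached_target / uncached_now
print(round(projected_daily_spend, 2))  # 170.18
```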

Working with ML Engineers: Communication That Works

ML engineers think differently than software engineers. Understanding their concerns helps you collaborate effectively.

What ML Engineers Care About

Data quality over quantity: “Garbage in, garbage out” is especially true for ML

Reproducibility: Can we recreate this result?

Evaluation: How do we know this works?

Edge cases: The long tail of weird inputs

Model performance: Accuracy, latency, resource usage

Technical debt: ML systems accumulate debt quickly

Questions ML Engineers Wish PMs Would Ask

Instead of: “Can you build this?” Ask: “What data would we need to build this? Do we have it?”

Instead of: “How accurate is the model?” Ask: “How are we measuring accuracy? What are we missing?”

Instead of: “Why is this taking so long?” Ask: “What’s the biggest uncertainty or blocker right now?”

Instead of: “Just ship it and we’ll fix later.” Ask: “What’s the minimum viable model quality we can ship with?”

Common ML Engineering Terms for PMs

| Term | What It Means | Product Relevance |

|---|---|---|

| Training | Teaching the model from data | Takes time, needs data |

| Inference | Using the model for predictions | Every user interaction |

| Fine-tuning | Adapting a pre-trained model | Faster than training from scratch |

| Hyperparameters | Model configuration settings | Affects performance |

| Overfitting | Model memorizes training data | Works on training data, fails on new data in production |

| Underfitting | Model too simple | Doesn’t capture patterns |

AI Ethics and Responsible AI for PMs

AI products have ethical implications. PMs need to think about these from the start.

Key Ethical Considerations

Bias and Fairness

AI systems can perpetuate or amplify biases in training data.

Example: A resume screening AI trained on historical hiring data

might learn to favor candidates similar to past hires, perpetuating

historical discrimination.

What to do:

- Audit training data for bias

- Test model outputs across different demographic groups

- Monitor for biased outcomes in production
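
A first pass at testing outputs across groups can be as simple as comparing selection rates. The sketch below uses invented screening outcomes for two groups and reports the ratio of the lowest rate to the highest. What ratio counts as acceptable is a policy and legal question, not something the code decides.

```python
from collections import defaultdict


def selection_rates(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive outcomes per group; records are (group, was_selected)."""
    totals: dict[str, int] = defaultdict(int)
    selected: dict[str, int] = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {g: selected[g] / totals[g] for g in totals}


def min_max_ratio(rates: dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Hypothetical screening outcomes for two groups.
records = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)
rates = selection_rates(records)
print(rates)  # {'group_a': 0.4, 'group_b': 0.2}
print(min_max_ratio(rates))  # 0.5
```

A gap like this doesn’t prove the model is biased, but it tells you where to look before launch rather than after.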

Privacy

AI often requires large amounts of data, raising privacy concerns.

What to do:

- Minimize data collection

- Anonymize where possible

- Be transparent about data use

- Follow regulations (GDPR, CCPA, etc.)

Transparency

Users should understand when they’re interacting with AI.

What to do:

- Disclose AI use clearly

- Explain limitations

- Provide recourse when AI makes mistakes

Accountability

When AI makes mistakes, who’s responsible?

What to do:

- Maintain human oversight for high-stakes decisions

- Create clear escalation paths

- Document decision-making processes

Responsible AI Checklist

Before Launch:

☐ Have we tested for bias across different user groups?

☐ Are we being transparent about AI use?

☐ Do we have human oversight for high-stakes decisions?

☐ Can users appeal or correct AI decisions?

☐ Are we complying with relevant regulations?

☐ Do we have monitoring for harmful outputs?

☐ Can we explain how the AI made a decision?

☐ Is there a way to shut it down quickly if needed?

Tools and Platforms for AI Product Development

You don’t need to build everything from scratch. The AI ecosystem has matured significantly.

LLM Providers

| Provider | Models | Best For |

|---|---|---|

| OpenAI | GPT-4, GPT-3.5 | General purpose, widely adopted |

| Anthropic | Claude | Long context, safety-focused |

| Google | Gemini | Multimodal, Google ecosystem |

| Meta | Llama (open source) | Customization, privacy |

| Mistral | Mistral, Mixtral | Open source, efficient |

Vector Databases (for RAG)

| Database | Best For |

|---|---|

| Pinecone | Managed, easy to start |

| Weaviate | Hybrid search, open source option |

| Chroma | Simple, Python-native |

| Qdrant | High performance, open source |

AI Development Platforms

| Platform | What It Does |

|---|---|

| LangChain | LLM application framework |

| LlamaIndex | Data framework for LLMs |

| Hugging Face | Model hub, open source community |

| Weights & Biases | ML experiment tracking |

| Scale AI | Data labeling, RLHF |

Getting Started in AI Product Management

Here’s a practical path to build your AI PM skills.

Week 1-2: Foundation

- Try ChatGPT, Claude, and Gemini. Understand what they do well and poorly.

- Build something simple: a chatbot, a summarizer, a classifier

- Read about LLM limitations (hallucination, context limits)

- Understand token-based pricing

Week 3-4: RAG and Applications

- Build a simple RAG system (many tutorials available)

- Understand embeddings and vector search

- Learn about prompt engineering

- Study real AI products and how they work

Week 5-6: Production Considerations

- Learn about AI evaluation methods

- Understand AI cost structures

- Study AI ethics and responsible AI

- Research AI regulations relevant to your industry

Week 7-8: Practical Application

- Identify an AI opportunity in your current product

- Write a problem statement and success criteria

- Create a data strategy

- Present to your team for feedback

Ongoing Learning

- Follow AI researchers and practitioners on social media

- Try new models and tools as they’re released

- Join AI PM communities

- Read papers and articles on AI applications in your industry

The Bottom Line

AI product management is the most important emerging discipline in product. The demand for PMs who understand AI is exploding, but the supply of truly skilled AI PMs is limited.

The opportunity is real. But it requires more than just enthusiasm about AI. It requires understanding what AI can and can’t do, how to build AI products that actually work, and how to navigate the unique challenges of probabilistic software.

You don’t need a PhD in machine learning. You need practical knowledge, good product instincts, and a willingness to keep learning as the field evolves.

Start today. Build something small. Learn from it. Then build something bigger.

About the Author

I’m Karthick Sivaraj, creator of The Naked PM. I’ve helped companies navigate the transition from traditional software products to AI-powered products. I’ve seen the successes and the failures—the teams that built transformative AI features and the teams that wasted months on AI hype.

My mission is to help product managers become the bridge between AI capability and user value. Because that’s where the real opportunity lies.

Connect with me on LinkedIn for weekly insights on AI product management, DevOps for PMs, and the practical skills that drive product success.

What AI feature are you considering building? Share in the comments, and let’s discuss whether it makes sense.
