📚 Table of Contents

- The AI Feature That Went Wrong (And Why It’s Not the Model’s Fault)

- What AI Actually Is (And What It Isn’t)

- LLMs Demystified: The PM’s Mental Model

- RAG, Fine-Tuning, and When to Use What

- The AI Feature Development Process

- Specifying AI Features: The New Requirements

- AI Costs: Understanding the Token Economy

- Testing and Quality for AI Features

- Common AI Product Mistakes

- Building Your AI Product Muscles

- Questions to Ask Your AI/Engineering Team

- The Bottom Line

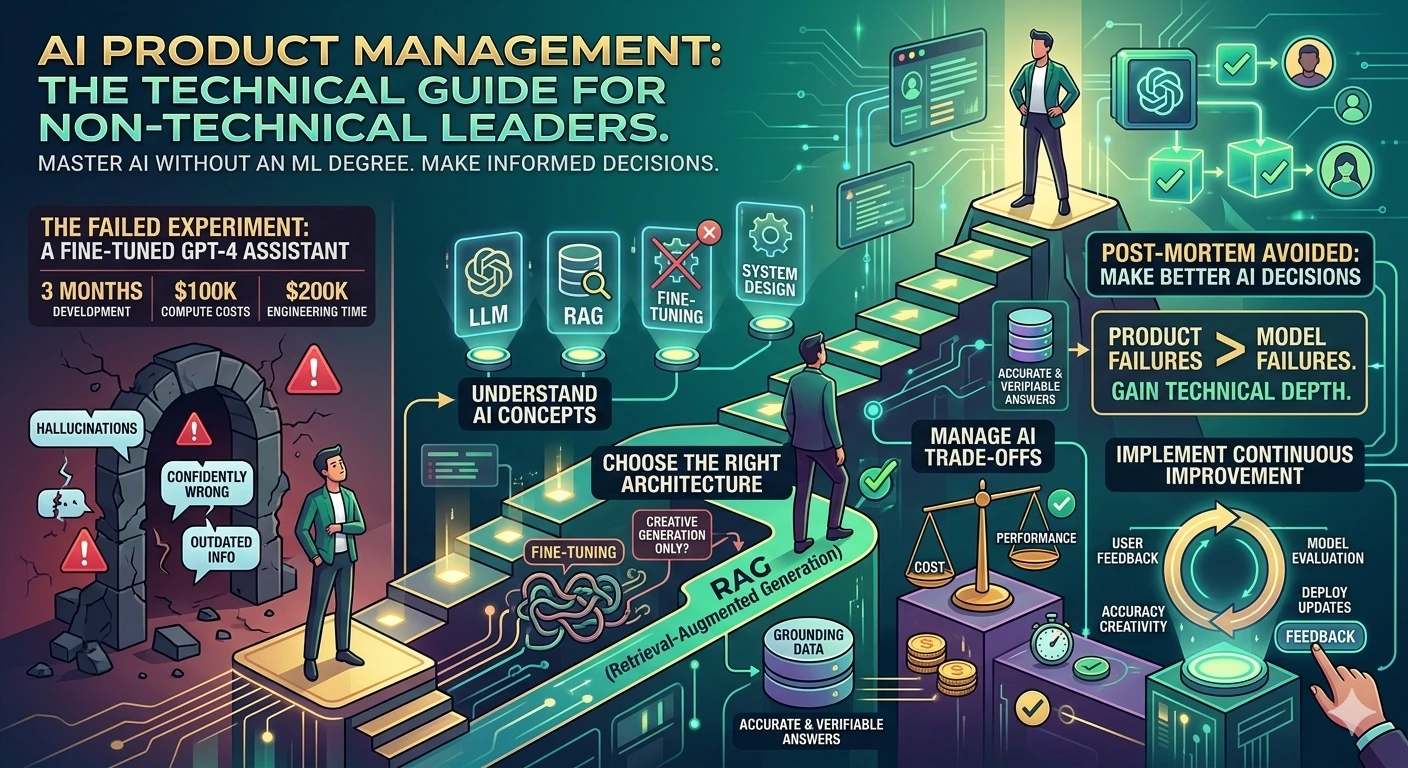

The AI Feature That Went Wrong (And Why It’s Not the Model’s Fault)

In 2024, I watched a company spend $300,000 building an AI feature that failed spectacularly.

The feature: An AI assistant that would answer customer support questions.

The approach:

- Fine-tuned GPT-4 on their support documentation

- 3 months of development

- $100K in compute costs

- $200K in engineering time

The launch: Users hated it.

The problems:

- Hallucinated policies that didn’t exist

- Confidently wrong about product features

- Couldn’t access real-time information

- Recommended discontinued products

The post-mortem: The model wasn’t the problem. The product decisions were:

- Fine-tuning was the wrong choice: They should have used RAG (Retrieval Augmented Generation)

- No grounding: The AI couldn’t verify its answers against actual data

- No feedback loop: Users couldn’t correct wrong answers

- Wrong use case: They wanted accurate answers, not creative generation

The naked truth: AI product failures are usually product failures, not model failures. The PM didn’t understand the technology well enough to make the right architecture decisions.

This guide will help you avoid that mistake.

What AI Actually Is (And What It Isn’t)

The Simple Definition

AI (Artificial Intelligence) = Systems that can perform tasks that typically require human intelligence.

For product managers, you’re mostly dealing with:

| Type | What It Does | Examples |
|---|---|---|
| LLMs | Generate text, understand language | ChatGPT, Claude, GPT-4 |
| Image Models | Generate/analyze images | DALL-E, Midjourney, Stable Diffusion |
| Embedding Models | Convert text to numbers for similarity | text-embedding-ada-002 |
| Speech Models | Convert speech to text and back | Whisper, TTS systems |

What AI Can Do Well

✅ Generate text (write emails, summarize, translate)

✅ Classify (sentiment, categories, intent)

✅ Extract information (entities, data points)

✅ Answer questions (with right context)

✅ Create variations (rewrite, reformat, adapt)

What AI Can’t Do Well

❌ Guarantee accuracy (hallucinations happen)

❌ Reason deeply (it predicts, doesn’t think)

❌ Access real-time data (training cutoffs exist)

❌ Understand context perfectly (limited by context window)

❌ Be reliable in the same way as code (probabilistic, not deterministic)

The Key Insight

AI is probabilistic. Traditional software is deterministic.

Traditional Software:

Input → Code → Same Output Every Time

AI Software:

Input → Model → Output That Can Differ Each Time (usually similar, but not guaranteed)

This fundamental difference changes everything about how you spec, test, and deploy AI features.

LLMs Demystified: The PM’s Mental Model

What an LLM Actually Is

LLM = Large Language Model = A text predictor trained on lots of text

The mental model: Imagine an auto-complete on steroids.

When you type “The cat sat on the”, an LLM predicts what comes next based on:

- What it’s seen before in training

- The context you’ve provided

- The probability of each possible next token (roughly a word or part of a word)

It doesn’t “understand” anything. It predicts what text should come next.

The Key Concepts for PMs

1. Context Window

The amount of text the model can “see” at once.

| Model | Context Window | What It Means |
|---|---|---|
| GPT-4 Turbo | 128K tokens | ~300 pages of text |
| GPT-4 | 8K tokens | ~20 pages |
| Claude 3 | 200K tokens | ~500 pages |

Product implication: You can pass documents, conversation history, or data within the context window. A larger window leaves more room for retrieved documents in RAG, but you pay for every token you send, so filling it gets expensive.

2. Tokens

Text is broken into tokens (roughly 4 characters of English text per token).

Cost calculation:

1,000 tokens ≈ 750 words

GPT-4: $0.03 per 1K input tokens

Cost for 100K words (≈133K tokens): ~$4
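The arithmetic above can be sketched as a small helper. It assumes the 750-words-per-1,000-tokens rule of thumb and the GPT-4 input price quoted above; real token counts come from the model's own tokenizer, so treat the result as an estimate.

```python
WORDS_PER_TOKEN = 0.75       # rule of thumb: 1,000 tokens ≈ 750 English words
PRICE_PER_1K_INPUT = 0.03    # GPT-4 input price quoted above, in USD

def estimate_tokens(word_count: int) -> int:
    """Rough token estimate from a word count (not a real tokenizer)."""
    return round(word_count / WORDS_PER_TOKEN)

def input_cost_usd(word_count: int, price_per_1k: float = PRICE_PER_1K_INPUT) -> float:
    """Estimated cost of sending `word_count` words to the model as input."""
    return estimate_tokens(word_count) / 1000 * price_per_1k

print(estimate_tokens(100_000))             # 133333
print(f"${input_cost_usd(100_000):.2f}")    # $4.00
```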

3. Temperature

Controls randomness in output.

| Temperature | Behavior | Use Case |
|---|---|---|
| 0 | Near-deterministic, most consistent | Factual answers, classification |
| 0.5 | Balanced | General use |
| 1.0+ | Creative, varied | Brainstorming, creative writing |

Product implication: Use low temperature for factual tasks, high for creative tasks.
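To make temperature concrete: the model assigns a score (a logit) to each candidate next token, divides those scores by the temperature, and turns them into probabilities with a softmax. The candidate words and scores below are made up for illustration; the point is how a low temperature concentrates probability on the top choice and a high one spreads it out.

```python
import math

def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw scores to probabilities, scaled by temperature.

    Temperature 0 is treated here as greedy decoding (all probability on the
    top-scoring token); real APIs handle this limit in their own way.
    """
    if temperature == 0:
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    m = max(scaled)                              # subtract max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for the next word after "The cat sat on the"
candidates = ["mat", "sofa", "roof"]
logits = [3.0, 2.0, 0.5]

for t in (0, 0.5, 1.0, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(t, {w: round(p, 2) for w, p in zip(candidates, probs)})
```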

4. Prompt

The instructions you give the model.

Prompt engineering matters. Same model, different prompts = completely different results.

Bad Prompt:

"Write an email about our product"

Good Prompt:

"You are a helpful customer success manager. Write a follow-up email to a customer who expressed interest in our enterprise plan.

Include:

- Reference to their specific use case (API integration)

- Clear next steps (schedule demo)

- Professional but friendly tone

Keep it under 200 words."
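One way to keep a good prompt consistent across requests is to treat it as a template and fill in the specifics each time. This sketch assumes the role/content message list used by chat-style APIs such as OpenAI's Chat Completions; the helper name and its parameters are hypothetical.

```python
def build_followup_prompt(use_case: str, next_step: str, max_words: int = 200) -> list[dict]:
    """Return chat messages for the follow-up email prompt (hypothetical helper)."""
    system = "You are a helpful customer success manager."
    user = (
        "Write a follow-up email to a customer who expressed interest "
        "in our enterprise plan.\n"
        "Include:\n"
        f"- Reference to their specific use case ({use_case})\n"
        f"- Clear next steps ({next_step})\n"
        "- Professional but friendly tone\n"
        f"Keep it under {max_words} words."
    )
    return [
        {"role": "system", "content": system},   # persona / standing instructions
        {"role": "user", "content": user},       # the specific task
    ]

for message in build_followup_prompt("API integration", "schedule demo"):
    print(f"{message['role']}: {message['content']}\n")
```

Keeping the persona in the system message and the task in the user message lets you change one without rewriting the other.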

RAG, Fine-Tuning, and When to Use What

The Three Approaches to AI Features

| Approach | What It Is | When to Use |
|---|---|---|
| Prompt Engineering | Craft good prompts | Simple use cases, prototypes |
| RAG | Retrieve relevant data, then generate | When you need accurate, current info |
| Fine-tuning | Train model on your data | When you need specific behavior/style |

RAG (Retrieval Augmented Generation)

What it is: Retrieve relevant documents, pass them to the LLM, then generate a response.

How it works:

User Query

↓

Convert query to embedding (numbers)

↓

Search vector database for similar documents

↓

Pass documents + query to LLM

↓

LLM generates answer based on retrieved documents

↓

Response
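The flow above can be sketched end to end in a few lines. To stay self-contained, this toy version swaps the real embedding model and vector database for word-count vectors and an in-memory list, and it stops at building the prompt that would be sent to the LLM. The documents and function names are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; in production these would be chunks of your docs
DOCS = [
    "To reset your password, click 'Forgot Password' on the login page.",
    "The Pro plan costs $20 per month and includes API access.",
    "Refunds are available within 30 days of purchase.",
]

def embed(text: str) -> Counter:
    """Toy 'embedding' (word counts). A real system calls an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Similarity between two vectors: 1.0 = same direction, 0.0 = nothing shared."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

# Stand-in for a vector database: precomputed (embedding, document) pairs
INDEX = [(embed(d), d) for d in DOCS]

def retrieve(query: str, k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(INDEX, key=lambda pair: cosine(q, pair[0]), reverse=True)
    return [doc for _, doc in ranked[:k]]

def build_rag_prompt(query: str) -> str:
    """Combine retrieved documents and the query into one grounded prompt."""
    context = "\n".join(f"- {d}" for d in retrieve(query, k=2))
    return (
        "Answer using ONLY the sources below. If they don't contain the answer, "
        "say you don't know.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )

print(build_rag_prompt("How do I reset my password?"))
```

The "answer only from the sources" instruction is what gives RAG its grounding: the model is steered toward your documents instead of whatever it absorbed in training.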

When to use RAG:

- Need accurate, up-to-date information

- Want to cite sources

- Information changes frequently

- Need to control what the AI knows

Example: Customer support chatbot that answers based on your actual documentation.

Fine-Tuning

What it is: Train a model on your specific data to change its behavior.

How it works:

Base Model (e.g., GPT-4)

↓

Train on your examples (inputs → desired outputs)

↓

Fine-tuned model that behaves more like you want

When to use fine-tuning:

- Need specific output format consistently

- Want specific style or voice

- Behavior you want can’t be achieved with prompting

- Have lots of training examples (100+ minimum, 1000+ better)

Example: An AI that writes in your company’s brand voice.
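Most of the practical work in "train on your examples" is preparing input → desired-output pairs in the format your provider expects. This sketch writes JSONL in the chat-style `messages` format accepted by OpenAI's fine-tuning API; other providers use different schemas. The brand name, system message, and example pairs are hypothetical, and a real dataset needs far more examples.

```python
import json

SYSTEM = "You write in Acme's brand voice: warm, concise, no jargon."  # hypothetical brand

# Hypothetical (input, desired output) pairs
EXAMPLES = [
    ("Announce our new dashboard.",
     "Say hello to your new dashboard: everything you need, one glance away."),
    ("Apologize for yesterday's outage.",
     "We let you down yesterday, and we're sorry. Here's what happened and what we're fixing."),
]

def to_chat_record(prompt: str, ideal: str) -> dict:
    """One training example: system message, user input, and the ideal reply."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM},
            {"role": "user", "content": prompt},
            {"role": "assistant", "content": ideal},
        ]
    }

def write_jsonl(path: str, pairs: list[tuple[str, str]]) -> int:
    """Write one JSON object per line; return the number of records written."""
    with open(path, "w", encoding="utf-8") as f:
        for prompt, ideal in pairs:
            f.write(json.dumps(to_chat_record(prompt, ideal)) + "\n")
    return len(pairs)

n = write_jsonl("brand_voice_train.jsonl", EXAMPLES)
print(f"wrote {n} examples")
```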

Decision Framework

What do you need?

│

├── Accurate, current information?

│ └── YES → RAG

│

├── Specific behavior or style?

│ └── YES → Fine-tuning (or both)

│

├── Simple, one-off task?

│ └── YES → Prompt engineering

│

└── Complex combination?

└── Hybrid: RAG + Fine-tuning + Good prompts
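The same tree can be written as a function, which forces the team to answer each question explicitly. Reducing the framework to two yes/no inputs is a simplification for illustration, not a complete decision procedure.

```python
def choose_approach(needs_accurate_current_info: bool, needs_specific_behavior: bool) -> str:
    """Map the decision-tree questions to an approach (simplified sketch)."""
    if needs_accurate_current_info and needs_specific_behavior:
        return "Hybrid: RAG + fine-tuning + good prompts"
    if needs_accurate_current_info:
        return "RAG"
    if needs_specific_behavior:
        # Fine-tuning is for behavior that prompting alone can't achieve
        return "Fine-tuning (try prompt engineering first)"
    return "Prompt engineering"

# The support bot from the opening story needed accurate, current answers:
print(choose_approach(needs_accurate_current_info=True, needs_specific_behavior=False))  # RAG
```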

The Cost Comparison

| Approach | Setup Cost | Ongoing Cost | Maintenance |
|---|---|---|---|
| Prompt Engineering | $0 | Per-token | Update prompts |
| RAG | $5K-20K | Per-token + vector DB | Update documents |
| Fine-tuning | $10K-100K | Per-token (sometimes lower) | Retrain periodically |

The AI Feature Development Process

How AI Development Differs from Traditional Development

| Traditional | AI |
|---|---|
| Write code | Design prompts and systems |
| Test outputs | Test behavior across many inputs |
| Debug with logs | Debug with examples |
| Deterministic | Probabilistic |
| Can guarantee behavior | Can only improve probability |

The AI Feature Development Cycle

1. DEFINE
├── What should the AI do?
├── What does "good" look like?
└── What are the failure modes?

2. PROTOTYPE
├── Test with simple prompts
├── Try different models
└── Measure baseline performance

3. ITERATE
├── Improve prompts
├── Add RAG if needed
├── Fine-tune if needed
└── Measure improvement

4. EVALUATE
├── Create test set
├── Define success metrics
└── Measure against thresholds

5. DEPLOY
├── Monitoring and logging
├── Feedback mechanisms
└── Iteration plan

6. IMPROVE
├── Collect failure cases
├── Update system
└── Repeat

The Timeline Reality

| Phase | Traditional Feature | AI Feature |
|---|---|---|
| Define | 1-2 days | 3-5 days |
| Build | 1-2 weeks | 2-4 weeks |
| Test | 2-3 days | 1-2 weeks |
| Iterate | Optional | Mandatory |
| Total | 2-3 weeks | 4-8 weeks |

AI takes longer because you're not just building; you're also evaluating and tuning the system's behavior until it is reliable enough to ship.

Specifying AI Features: The New Requirements

The AI Feature Spec Template

## AI Feature: [Name]

### Problem Statement

[What user problem does this solve?]

### AI Component

**Input:** What goes into the model?

**Output:** What comes out?

**Behavior:** How should it act?

### Examples of Good Behavior

[Provide 5-10 examples of ideal inputs and outputs]

Example 1:

- Input: "How do I reset my password?"

- Output: "To reset your password, click 'Forgot Password' on the login page..."

### Examples of Bad Behavior

[Provide 5-10 examples of what NOT to do]

Example 1:

- Input: "How do I reset my password?"

- Output: "Here's a link to reset: [made-up URL]" (Hallucination)

### Success Metrics

- Accuracy: % of responses that are correct

- Relevance: % of responses that address the question

- User satisfaction: Feedback score

- Latency: P95 response time

### Failure Modes

[What could go wrong?]

- Hallucination: Model makes up information

- Out of scope: Model answers questions it shouldn't

- Inconsistency: Same question, different answers

### Mitigation Strategies

[How do we prevent/handle failures?]

- Grounding: RAG with verified documents

- Guardrails: Prompt constraints

- Feedback: User can flag wrong answers

### Non-AI Components

[What traditional code is needed?]

- Input validation

- Output formatting

- Error handling

- Logging/monitoring

AI Costs: Understanding the Token Economy

The Token Economics

Input tokens: Text you send to the model

Output tokens: Text the model generates

Cost structure (GPT-4-class pricing; output tokens cost more than input tokens):

- Input tokens: ~$0.01-0.03 per 1K tokens

- Output tokens: ~$0.03-0.06 per 1K tokens

Cost Estimation Framework

AI COST CALCULATOR

Feature: Customer Support Chatbot

USAGE ESTIMATES:
├── Queries per day: 10,000
├── Average input tokens: 500
└── Average output tokens: 200

DAILY COST:
├── Input: 10K × 500 × $0.01/1K = $50
└── Output: 10K × 200 × $0.03/1K = $60
Total: $110/day

MONTHLY COST: ~$3,300
YEARLY COST: ~$40,000

OPTIMIZATION OPTIONS:
├── Use smaller model for simple queries: -$20K/year
├── Cache common responses: -$10K/year
└── Optimize prompts (shorter): -$5K/year

OPTIMIZED YEARLY COST: ~$5,000 (best case, assuming the three savings fully stack)
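The calculator above as code, using the same illustrative usage figures and per-1K-token prices so you can swap in your own numbers. It assumes 30 days per month and 365 per year, and leaves out the optimization savings because how much they overlap depends on your traffic.

```python
from dataclasses import dataclass

@dataclass
class Usage:
    queries_per_day: int
    input_tokens: int           # average per query
    output_tokens: int          # average per query
    input_price_per_1k: float   # USD
    output_price_per_1k: float  # USD

def daily_cost(u: Usage) -> float:
    """Input and output tokens are billed separately, per 1K tokens."""
    input_cost = u.queries_per_day * u.input_tokens / 1000 * u.input_price_per_1k
    output_cost = u.queries_per_day * u.output_tokens / 1000 * u.output_price_per_1k
    return input_cost + output_cost

def cost_per_query(u: Usage) -> float:
    return daily_cost(u) / u.queries_per_day

chatbot = Usage(queries_per_day=10_000, input_tokens=500, output_tokens=200,
                input_price_per_1k=0.01, output_price_per_1k=0.03)

day = daily_cost(chatbot)
print(f"per query: ${cost_per_query(chatbot):.3f}")   # $0.011
print(f"daily:     ${day:,.0f}")                      # $110
print(f"monthly:   ${day * 30:,.0f}")                 # $3,300
print(f"yearly:    ${day * 365:,.0f}")                # $40,150
```

Cost per query is often the most useful number to bring to a pricing or packaging discussion, because it scales directly with the usage forecast.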

Cost Optimization Strategies

| Strategy | Savings | Trade-off |
|---|---|---|
| Smaller models | 50-80% | Lower quality; best reserved for simple tasks |
| Prompt caching | 30-50% | Only helps when prompts or queries repeat |
| Shorter prompts | 10-30% | Fewer instructions and examples can lower quality |
| Batch processing | 20-40% | Not real-time |
| Local models | 80-100% of API spend | Higher infra cost, more complexity |
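Two of these strategies, routing simple queries to a smaller model and caching repeated queries, can be sketched together. The model names, the word-count routing rule, and `call_model` are hypothetical placeholders; a real router might use a classifier, and a real cache needs expiry and invalidation when your documents change.

```python
from functools import lru_cache

CHEAP_MODEL = "small-model"    # hypothetical model names
STRONG_MODEL = "large-model"

calls = {CHEAP_MODEL: 0, STRONG_MODEL: 0}   # how many billed calls each model received

def call_model(model: str, query: str) -> str:
    """Placeholder for a real API call; counts calls so the savings are visible."""
    calls[model] += 1
    return f"[{model}] answer to: {query}"

def pick_model(query: str) -> str:
    """Naive routing rule: short queries go to the cheaper model."""
    return CHEAP_MODEL if len(query.split()) <= 8 else STRONG_MODEL

def normalize(query: str) -> str:
    """Collapse case and whitespace so trivially different queries share a cache entry."""
    return " ".join(query.lower().split())

@lru_cache(maxsize=10_000)
def _cached_answer(normalized_query: str) -> str:
    return call_model(pick_model(normalized_query), normalized_query)

def answer(query: str) -> str:
    return _cached_answer(normalize(query))

answer("How do I reset my password?")
answer("how do I reset my   password?")   # cache hit: no second model call
answer("Compare the Pro and Enterprise plans for a team of fifty engineers using SSO")
print(calls)
```

Caching also freezes one answer per query, which suits factual support questions but not tasks where you want varied output.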

Testing and Quality for AI Features

The AI Testing Challenge

Traditional software: Same input → Same output → Easy to test

AI software: Same input → Different output → Hard to test

The AI Testing Framework

1. Create a Test Set

Test Set: 100+ examples covering:

├── Happy path queries

├── Edge cases

├── Adversarial inputs

├── Out-of-scope questions

└── Multi-turn conversations

2. Define Evaluation Criteria

| Criterion | Definition | How to Measure |
|---|---|---|
| Accuracy | Is the answer correct? | Manual review or fact-checking |
| Relevance | Does it address the question? | Human rating or classifier |
| Safety | Is it harmful? | Safety classifier + manual review |
| Tone | Is it appropriate? | Human rating |
| Conciseness | Is it appropriately brief? | Word count + human rating |

3. Run Evaluation

For each test case:

├── Run through system

├── Evaluate against criteria

├── Record pass/fail

└── Identify failure patterns
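The evaluation loop above can be sketched as a small harness. The test cases, the stand-in `ai_feature` function, and the keyword checks are all hypothetical; real criteria such as accuracy or tone usually need human review or a grader model, but the bookkeeping (pass/fail per case, failures grouped by category) looks much the same.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass
class EvalCase:
    query: str
    category: str                 # e.g. happy path, edge case, out of scope
    must_include: list[str]       # crude stand-in for "is the answer correct?"
    must_not_include: list[str]   # e.g. URLs the model might make up

def ai_feature(query: str) -> str:
    """Hypothetical stand-in for the system under test."""
    if "password" in query.lower():
        return "Click 'Forgot Password' on the login page."
    return "Here's a link: http://example.com/made-up"

def evaluate(case: EvalCase, output: str) -> bool:
    text = output.lower()
    has_required = all(s.lower() in text for s in case.must_include)
    has_forbidden = any(s.lower() in text for s in case.must_not_include)
    return has_required and not has_forbidden

TEST_SET = [
    EvalCase("How do I reset my password?", "happy path", ["forgot password"], ["http"]),
    EvalCase("Password reset, but I lost access to my email", "edge case", ["support"], ["http"]),
    EvalCase("What's the weather today?", "out of scope", ["can't help"], ["http"]),
]

results = [(case, evaluate(case, ai_feature(case.query))) for case in TEST_SET]
pass_rate = sum(ok for _, ok in results) / len(results)
failures_by_category = Counter(case.category for case, ok in results if not ok)

print(f"pass rate: {pass_rate:.0%}")
print("failure patterns:", dict(failures_by_category))
```

Grouping failures by category is what turns "it's wrong sometimes" into an actionable finding, such as "it never declines out-of-scope questions."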

4. Iterate

Identify failure patterns

↓

Update prompts/system

↓

Re-evaluate

↓

Repeat until pass rate > threshold

The Quality Threshold

| Feature Type | Minimum Pass Rate |
|---|---|
| Internal tool | 70% |
| Customer-facing | 85% |
| High-stakes (medical, legal) | 95%+ |
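The thresholds can be wired into evaluation as a release gate, so "good enough" is agreed before the results come in. The dictionary keys are just labels for the rows of the table above, and the pass rates in the usage lines are example values.

```python
# Minimum pass rates from the table above
THRESHOLDS = {
    "internal": 0.70,
    "customer_facing": 0.85,
    "high_stakes": 0.95,
}

def ready_to_ship(pass_rate: float, feature_type: str) -> bool:
    """True if the measured pass rate meets the bar for this feature type."""
    if not 0.0 <= pass_rate <= 1.0:
        raise ValueError("pass_rate must be between 0 and 1")
    return pass_rate >= THRESHOLDS[feature_type]

print(ready_to_ship(0.88, "customer_facing"))  # True
print(ready_to_ship(0.88, "high_stakes"))      # False
```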

Common AI Product Mistakes

Mistake 1: Expecting Determinism

What happens: You expect the AI to give the same answer every time.

The reality: AI is probabilistic. Same question can yield different answers.

The fix: Design for variability. Set expectations with users. Test with multiple runs.
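"Test with multiple runs" can be as simple as asking the same question several times and measuring how often the answers agree. `flaky_model` is a hypothetical stand-in whose wording varies between runs; exact-string matching is crude, so in practice teams often compare normalized answers or use a grader instead.

```python
import random
from collections import Counter

def flaky_model(query: str, rng: random.Random) -> str:
    """Hypothetical model whose answer varies between runs (ignores the query)."""
    return rng.choice([
        "Go to Settings > Security to reset it.",
        "Go to Settings > Security to reset it.",
        "Use 'Forgot Password' on the login page.",
    ])

def consistency(query: str, runs: int = 20, seed: int = 0) -> float:
    """Share of runs that return the most common answer (1.0 = fully consistent)."""
    rng = random.Random(seed)      # seeded so the check itself is repeatable
    answers = Counter(flaky_model(query, rng) for _ in range(runs))
    most_common_count = answers.most_common(1)[0][1]
    return most_common_count / runs

print(f"{consistency('How do I reset my password?'):.0%}")
```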

Mistake 2: Ignoring Hallucinations

What happens: You ship an AI that confidently makes things up.

The reality: All LLMs hallucinate sometimes. This isn't a bug you can patch out; it's a consequence of how they generate text.

The fix: Ground responses in real data (RAG). Show sources. Let users verify.

Mistake 3: No Feedback Mechanism

What happens: You don’t know when the AI fails.

The reality: Users experience failures silently. You never learn or improve.

The fix: Thumbs up/down, report issues, collect examples.
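A minimal sketch of what a thumbs up/down mechanism might record so that failures become reusable test cases. The field and function names are hypothetical; the key design choice is storing the exact query and response alongside the rating, since a bare score can't be turned into a regression test.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass
class Feedback:
    query: str
    response: str
    thumbs_up: bool
    comment: str = ""
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

FEEDBACK_LOG: list[Feedback] = []   # in-memory for the sketch; persist this in practice

def record_feedback(query: str, response: str, thumbs_up: bool, comment: str = "") -> None:
    FEEDBACK_LOG.append(Feedback(query, response, thumbs_up, comment))

def failures_as_test_cases() -> list[dict]:
    """Turn thumbs-down events into candidates for the evaluation test set."""
    return [
        {"query": f.query, "bad_response": f.response, "note": f.comment}
        for f in FEEDBACK_LOG
        if not f.thumbs_up
    ]

record_feedback("Do you sell the X100?", "Yes, the X100 is in stock.", False, "X100 is discontinued")
record_feedback("How do I reset my password?", "Use 'Forgot Password'.", True)
print(failures_as_test_cases())
```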

Mistake 4: Wrong Architecture Choice

What happens: You fine-tune when you should RAG. Or vice versa.

The reality: Architecture choice determines success.

The fix: Use the decision framework earlier in this guide.

Mistake 5: No Cost Controls

What happens: Your AI feature becomes unexpectedly expensive.

The reality: AI costs scale with usage. No controls = budget surprise.

The fix: Set usage limits. Monitor costs. Have optimization plan.
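Usage limits can start as a simple budget guard in front of the model call. The class, the daily budget, and the per-user cap are hypothetical; a production version would persist the counters in a shared store rather than in memory, and reset them on a schedule.

```python
from collections import defaultdict

class BudgetGuard:
    """Rejects requests once a per-user or global daily spend limit would be exceeded."""

    def __init__(self, daily_budget_usd: float, per_user_daily_usd: float):
        self.daily_budget = daily_budget_usd
        self.per_user_limit = per_user_daily_usd
        self.spent_today = 0.0
        self.spent_by_user: dict[str, float] = defaultdict(float)

    def allow(self, user_id: str, estimated_cost: float) -> bool:
        """Check both limits before calling the model."""
        if self.spent_today + estimated_cost > self.daily_budget:
            return False
        if self.spent_by_user[user_id] + estimated_cost > self.per_user_limit:
            return False
        return True

    def record(self, user_id: str, actual_cost: float) -> None:
        """Record real spend after the call (e.g. computed from reported token usage)."""
        self.spent_today += actual_cost
        self.spent_by_user[user_id] += actual_cost

    def reset_day(self) -> None:
        self.spent_today = 0.0
        self.spent_by_user.clear()

guard = BudgetGuard(daily_budget_usd=150.0, per_user_daily_usd=0.05)
cost = 0.011   # ~$110/day ÷ 10,000 queries, from the cost calculator above
for _ in range(5):
    if guard.allow("user-42", cost):
        guard.record("user-42", cost)
print(round(guard.spent_by_user["user-42"], 3))   # fifth request is blocked by the per-user cap
```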

Building Your AI Product Muscles

What to Learn

Week 1: Fundamentals

- Use ChatGPT and Claude extensively

- Understand prompting

- Try different temperatures

Week 2: Technical Concepts

- Learn about embeddings

- Understand RAG

- Explore tokenization

Week 3: Hands-On

- Build a simple RAG system

- Experiment with prompt engineering

- Test different models

Week 4: Strategic

- Analyze AI costs for your use case

- Build an AI feature spec

- Present to stakeholders

Resources for PMs

Non-Technical:

- “Co-Intelligence” by Ethan Mollick

- “The AI Playbook” courses

- OpenAI Cookbook (practical examples)

Semi-Technical:

- “Designing Machine Learning Systems” (Chip Huyen)

- Andrej Karpathy’s “Intro to LLMs” YouTube

- LangChain documentation

Questions to Ask Your AI/Engineering Team

About the Approach

- “Are we using RAG or fine-tuning? Why?”

- “What model are we using? Why that one?”

- “How do we handle hallucinations?”

About Quality

- “How do we test this feature?”

- “What’s our accuracy on the test set?”

- “What are the known failure modes?”

About Cost

- “What’s the cost per query?”

- “How does cost scale with usage?”

- “What’s our optimization plan?”

About Deployment

- “How do we monitor performance?”

- “How do we gather user feedback?”

- “What’s our iteration plan?”

The Bottom Line

AI product management is product management—with new tools and new trade-offs.

You need to understand:

- What AI can and can’t do

- The different approaches (RAG vs. fine-tuning)

- How to specify AI behavior

- How to measure AI quality

- How to manage AI costs

You don’t need to:

- Implement models yourself

- Understand the math

- Build the infrastructure

The shift: When you understand AI technically enough to make good product decisions, you become the PM who can actually ship AI features that work.

Start today: Use AI tools extensively. Build something small. Learn by doing.

What AI feature are you building? What’s your biggest challenge?
